What hosts do to ensure top site performance and help improve search engine rankings

Generally speaking, good design lies at the heart of any successful website.

However, neither the best web design layout nor the most reliable framework can make up for the glitches of the underlying web hosting service.

You may have the best-looking site on the web, but if it suffers from frequent ‘hiccups’ (slang for short spells of downtime), it will look ‘sick’ to your visitors.

We’ve tried to summarize the major responsibilities of a decent web hosting provider.

What are the main responsibilities of a host?

Good web hosting can be defined in 3 words – uptime, performance and security. They complement each other and together serve as the criteria for judging the reliability of a given host.

And you know what? Google thinks the same. Over the last few years, the leading search engine has added uptime, page loading speed and security to its ranking algorithm and uses them to define the reliability of a given website.

So, the more dependable the host is, the more reliable the site will appear in the eyes of both visitors and search engines. What’s more important than that?

1. Server Uptime

Downtime is inevitable in the web hosting industry, because the risk of a hardware failure can never be ruled out completely. This is why minimizing downtime in order to provide a decent amount of service uptime is the primary concern of hosting providers.

You can recognize a reliable host by the amount of uptime they are able to provide. Uptime is so important that Google has added it to its ranking algorithm (check out the 200 ranking factors laid out by Backlinko).

Maintaining a decent uptime, however, requires a good balance between hardware and software, as well as constant maintenance and upkeep.

For instance, the hosting provider may have ensured a perfect hardware configuration, but if they haven’t coupled it with software that is well-optimized and thoroughly tested to prevent edge cases, then the stability and performance will be at constant risk of being compromised.

The reverse case holds true as well – the host may have made the best software selection, but if the underlying hardware is faulty, then the site’s availability will be compromised as well.

To minimize faulty hardware occurrences, hosts usually invest in enterprise-grade hardware that is capable of handling critical loads.

As an extra measure to cement high uptime levels, hosts also focus on providing hardware/software/network redundancy.

For example, on our platform, we have deployed RAID 10, which mirrors data on another disk so that a copy is available if a drive fails.

Software redundancy is ensured by an alternative redundant DNS service, through which DNS traffic could be re-routed in the event of a problem.

For instance, we’ve got name servers on different continents, so if a given domain stops resolving through one of them, the DNS traffic associated with it will be handled immediately by a name server in another data center.
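The failover idea can be sketched in a few lines of Python. This is a simplified illustration, not our production setup: the `resolve_with_failover` helper and the two stand-in name servers (`broken_ns`, `healthy_ns`) are hypothetical, and real DNS clients handle this by trying the name servers listed for a domain.

```python
from typing import Callable, Optional, Sequence

# A resolver takes a domain name and returns an IP address string,
# or raises OSError when the name server cannot be reached.
Resolver = Callable[[str], str]


def resolve_with_failover(domain: str, resolvers: Sequence[Resolver]) -> Optional[str]:
    """Try each name server in order and return the first successful answer."""
    for resolver in resolvers:
        try:
            return resolver(domain)
        except OSError:
            continue  # this name server is down, fall through to the next one
    return None  # every name server failed


def broken_ns(domain: str) -> str:
    # Hypothetical name server that simulates an outage.
    raise OSError("name server unreachable")


def healthy_ns(domain: str) -> str:
    # Hypothetical name server; 192.0.2.10 is a documentation-only address.
    return "192.0.2.10"


answer = resolve_with_failover("example.com", [broken_ns, healthy_ns])
print(answer)
```

As long as one name server in the list still answers, the visitor gets a result and never notices the outage.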

Also, our admins are constantly working to implement network and power path redundancy within each data center in order to back our 99% uptime guarantee.
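To put an uptime percentage into perspective, it helps to convert it into the amount of downtime it still allows. The short Python sketch below does that arithmetic; the `allowed_downtime_hours` helper is our own illustrative name, and the 30-day month is an assumption made for simplicity.

```python
def allowed_downtime_hours(uptime_percent: float, period_hours: float) -> float:
    """Return how many hours of downtime an uptime guarantee still permits."""
    if not 0.0 <= uptime_percent <= 100.0:
        raise ValueError("uptime_percent must be between 0 and 100")
    return period_hours * (1 - uptime_percent / 100)


HOURS_PER_YEAR = 365 * 24   # 8760 hours
HOURS_PER_MONTH = 30 * 24   # assuming a 30-day month

yearly = allowed_downtime_hours(99.0, HOURS_PER_YEAR)
monthly = allowed_downtime_hours(99.0, HOURS_PER_MONTH)

print(f"99% uptime allows {yearly:.1f} hours of downtime per year")
print(f"99% uptime allows {monthly:.1f} hours of downtime per month")
```

For a 99% guarantee this works out to about 87.6 hours per year, or about 7.2 hours in a 30-day month.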

2. Loading Speeds

Fast page loading is another search engine ranking factor in which hosts have a key part to play. It also improves user experience and helps increase conversion rates.

By securing a balanced hardware-software selection as explained above, hosts can guarantee top website performance.

Top-class server drives will speed up sites and applications only if they are coupled with an up-to-date web server and a suitable operating system, and the reverse holds true as well.

A growing number of hosts are now switching to SSD-based storage, which offers much faster data read/write speeds and supports higher website availability and accelerated application performance.

Also, we use ZFS with LZ4-based data compression to store content on our platform, which speeds up site delivery noticeably in comparison with sites stored on mainstream EXT4-based hosting platforms, such as cPanel-based ones.

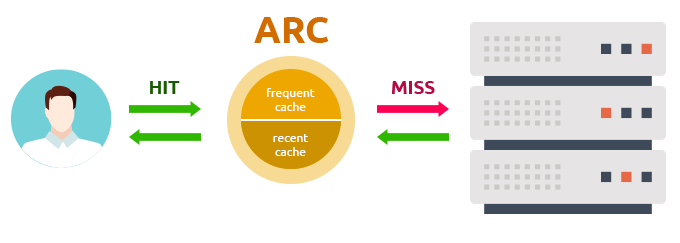

Plus, we deploy ZFS’s very fast ARC cache, which is located in the server’s RAM. It allows frequently accessed data to be served directly from memory instead of being read from disk, the result being a noticeable performance boost.
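To show why an in-memory cache helps, here is a minimal Python sketch. Note that it uses a plain least-recently-used (LRU) policy, which is much simpler than the adaptive algorithm ZFS’s ARC actually uses; the `LRUCache` class and the `slow_read` stand-in for a disk read are hypothetical and only illustrate the idea.

```python
from collections import OrderedDict
from typing import Callable


class LRUCache:
    """Keep the most recently used items in memory and evict the oldest ones."""

    def __init__(self, capacity: int, load_from_disk: Callable[[str], bytes]) -> None:
        self.capacity = capacity
        self.load_from_disk = load_from_disk
        self.entries: "OrderedDict[str, bytes]" = OrderedDict()
        self.hits = 0
        self.misses = 0

    def get(self, key: str) -> bytes:
        if key in self.entries:
            self.entries.move_to_end(key)  # mark as most recently used
            self.hits += 1
            return self.entries[key]
        self.misses += 1
        value = self.load_from_disk(key)  # slow path: go to storage
        self.entries[key] = value
        if len(self.entries) > self.capacity:
            self.entries.popitem(last=False)  # evict the least recently used item
        return value


def slow_read(path: str) -> bytes:
    # Hypothetical stand-in for a disk read.
    return f"contents of {path}".encode()


cache = LRUCache(capacity=2, load_from_disk=slow_read)
for path in ["/index.html", "/index.html", "/style.css", "/index.html"]:
    cache.get(path)

print(cache.hits, cache.misses)
```

In this run, two of the four requests are answered from memory without touching the storage at all, which is where the speed-up comes from.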

3. Platform Security

Keeping websites in a secure environment is essential for each web hosting provider. The tighter the security measures are, the lower the chance that a website will be broken into or a server compromised.

Taking into account that frequently compromised sites hurt user experience, Google has taken special measures to warn both site owners (through Google Search Console warnings) and users (by means of browser notices) when malware is detected. This suggests that the search engine may well keep a more critical eye on frequently compromised sites when assessing a site’s ranking potential.

This is why hosts put a lot of effort and resources into reinforcing the security of their platforms on a server level.

They offer secure SSL/TLS-protected login areas and avoid storing passwords in plain text.
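As an illustration of what avoiding plain-text passwords can look like, here is a minimal Python sketch that stores a salted PBKDF2 hash instead of the password itself. It relies only on the standard library; the `hash_password` and `verify_password` helpers and the iteration count are our own assumptions for the example, not a description of any particular host’s implementation.

```python
import hashlib
import hmac
import os
from typing import Tuple

ITERATIONS = 200_000  # illustrative work factor; tune it for your hardware


def hash_password(password: str) -> Tuple[bytes, bytes]:
    """Return a random salt and the PBKDF2-HMAC-SHA256 digest of the password."""
    salt = os.urandom(16)
    digest = hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, ITERATIONS)
    return salt, digest


def verify_password(password: str, salt: bytes, expected: bytes) -> bool:
    """Recompute the digest and compare it in constant time."""
    candidate = hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, ITERATIONS)
    return hmac.compare_digest(candidate, expected)


salt, digest = hash_password("correct horse battery staple")
print(verify_password("correct horse battery staple", salt, digest))
print(verify_password("guess", salt, digest))
```

Only the salt and the digest need to be stored, so a leaked database does not reveal the original passwords directly.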

To ensure efficient anti-virus protection of the server, hosts invest in enterprise-level solutions.

DDoS protection is another key security provision, aimed at mitigating the large-scale attacks that are so common in today’s web environment.

On our platform, we also deploy rootkit scanning to detect any unauthorized root-level access to the server.

To keep your content intact, rain or shine, we run multiple backups per day.

4. Server Location

Technically speaking, the closer to its visitors a given site is hosted, the better the browsing experience will be.

For instance, a site targeted at visitors from Australia will perform better if it is hosted in Australia than if it is hosted in the United States.
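A rough back-of-the-envelope calculation shows why distance matters. The Python sketch below estimates the physical lower bound of a network round trip between two cities. The coordinates for Sydney and Los Angeles are approximate, and the signal speed in optical fiber (about 200,000 km/s) is a commonly used rule of thumb; real-world latency is higher because of routing and processing.

```python
import math

EARTH_RADIUS_KM = 6371
FIBER_SPEED_KM_PER_S = 200_000  # rule of thumb: about two thirds of light speed


def great_circle_km(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Distance between two points on Earth using the haversine formula."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    d_phi = phi2 - phi1
    d_lambda = math.radians(lon2 - lon1)
    a = math.sin(d_phi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(d_lambda / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def min_round_trip_ms(distance_km: float) -> float:
    """Best-case round trip time: there and back at fiber speed."""
    return 2 * distance_km / FIBER_SPEED_KM_PER_S * 1000


# Approximate coordinates, used only for illustration.
SYDNEY = (-33.87, 151.21)
LOS_ANGELES = (34.05, -118.24)

distance = great_circle_km(*SYDNEY, *LOS_ANGELES)
print(f"distance: {distance:.0f} km, best-case round trip: {min_round_trip_ms(distance):.0f} ms")
```

The result is a distance of roughly 12,000 km and a best-case round trip of roughly 120 ms, a delay that applies to every request before the server has done any work at all.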

Google knows that too and has hence given preference to sites with a local server IP address in local search results.

Content Delivery Network (CDN) solutions like Cloudflare try to bridge the location gap between data centers and target audiences by means of caching and network routing.

Their main purpose is to neutralize the location factor by improving delivery speeds.

However, CDNs work best for static content. With dynamic requests, the CDN often cannot serve all of them from its cache and has to forward some to the origin server, which has the reverse effect and slows down the given site’s performance.
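The effect of the cache hit ratio can be approximated with simple arithmetic. The Python sketch below is a simplified model with made-up numbers: every request pays the trip to the CDN edge, and every cache miss additionally pays the trip from the edge to the origin server. The `expected_latency_ms` helper and all latency figures are assumptions for illustration only.

```python
def expected_latency_ms(hit_ratio: float, edge_ms: float, origin_ms: float) -> float:
    """Average latency when misses travel on from the CDN edge to the origin."""
    if not 0.0 <= hit_ratio <= 1.0:
        raise ValueError("hit_ratio must be between 0 and 1")
    return edge_ms + (1 - hit_ratio) * origin_ms


EDGE_MS = 20      # visitor to nearby CDN edge (assumed)
ORIGIN_MS = 200   # CDN edge to distant origin server (assumed)
DIRECT_MS = 180   # visitor straight to the origin, no CDN (assumed)

mostly_static = expected_latency_ms(0.95, EDGE_MS, ORIGIN_MS)
mostly_dynamic = expected_latency_ms(0.10, EDGE_MS, ORIGIN_MS)

print(f"95% cache hits: {mostly_static:.0f} ms on average")
print(f"10% cache hits: {mostly_dynamic:.0f} ms on average (direct: {DIRECT_MS} ms)")
```

With these assumed figures, a mostly static site averages about 30 ms, while a mostly dynamic one averages about 200 ms, which is slower than the 180 ms it would take to reach the origin directly.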

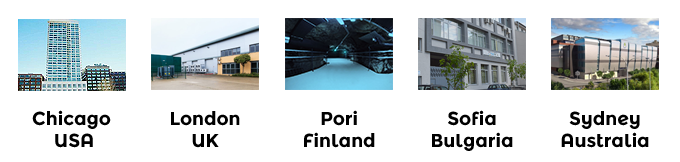

This is why location is still a major factor in defining website performance, which explains why hosts offer a wide selection of data center choices to their customers.

We offer data center locations on 3 continents for you and your customers to choose from.

5. 24/7 Support

No hosting provider can claim to deliver high quality to their customers without a decent customer support service to back it up.

The host may be doing a great job maintaining a good server uptime, but if the customer has a local site problem, the host’s tech support team reps should be the ones to diagnose the problem and help the user get the site back online.

For instance, on our platform, we offer help for compromised sites to help users get them back to normal as fast as possible.

It’s the same with page loading speeds – the web server may offer perfect read/write speeds, but if the site owner faces problems with non-optimized scripts or outdated CMSs, then it’s the host’s job to offer advice.

For example, we offer the following support channels:

Go the extra mile: dev-friendly solutions offered by hosting providers

Yes, maintaining a decent server uptime, fast loading speeds and high levels of security takes up loads of time.

However, a reliable platform can well be complemented with tools and functionalities that save developers both effort and time.

Here is a list of some of the dev-oriented tools you can find in the Web Hosting Control Panel. It comes with our shared hosting packages and semi-dedicated servers and is offered as a free upgrade with our Virtual Private Servers and dedicated servers:

Control over PHP settings – devs can select the PHP version they have optimized their projects for straight from their Control Panel. We regularly update all PHP versions that are in circulation, including the latest PHP 5.6 and PHP 7 releases.

Supervisor – this recently added tool helps devs run background processes in their hosting accounts, such as a chat server over the TCP-based WebSocket protocol or a Ruby on Rails server.

PHP Opcode Caching – using the APC (Alternative PHP Cache) framework, devs who manage applications with a large source code base can achieve a threefold increase in page generation speed.

Web accelerators – speeding up page delivery has been made easy with the integration of data caching solutions like Memcached and Varnish into the Control Panel.

Building data-intensive real-time applications has been streamlined with the integration of Node.js.

Anti-hack firewall – securing web applications like WordPress and Joomla has been made easy with the implementation of ModSecurity. Users can decide whether to keep it ON or OFF (or in Detect mode for specific applications).

Outgoing Connections – this tool allows users to open outgoing connections to certain IPs or IP ranges, depending on the purpose of their applications.

IP Blocking – devs can easily block attacks from certain abusers and protect their web projects by denying access to a specific IP address/IP range.
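To give an idea of what IP blocking involves under the hood, here is a minimal Python sketch built on the standard `ipaddress` module. It checks a visitor’s address against a list of single IPs and IP ranges in CIDR notation. The `is_blocked` helper and the sample blocklist (documentation-only addresses) are hypothetical and are not how the Control Panel tool itself is implemented.

```python
import ipaddress
from typing import Iterable


def is_blocked(client_ip: str, blocked: Iterable[str]) -> bool:
    """Return True if client_ip matches any blocked address or CIDR range."""
    address = ipaddress.ip_address(client_ip)
    return any(
        # A single IP such as "203.0.113.7" is treated as a one-address network.
        address in ipaddress.ip_network(entry, strict=False)
        for entry in blocked
    )


# Sample blocklist: one single address and one /24 range.
BLOCKLIST = ["203.0.113.7", "198.51.100.0/24"]

for visitor in ["203.0.113.7", "198.51.100.42", "192.0.2.1"]:
    status = "denied" if is_blocked(visitor, BLOCKLIST) else "allowed"
    print(visitor, status)
```

The first two visitors match an entry in the blocklist and are denied, while the third is allowed through.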

***

When searching for a reliable web hosting provider, site owners should be armed with knowledge in order to make the best choice and to avoid going back and forth with a non-responsive service.

Apart from providing a fast, reliable and secure service, good hosts should also offer a productive environment in which developers can focus their efforts on delivering a top visitor experience and achieving higher search engine placement.

Originally published Tuesday, May 3rd, 2016 at 2:24 pm, updated July 8, 2024. Filed under Web Hosting Platform. Tags: web hosting, online security
